TL;DR

Last week we published a field guide to the filesystem problems we hit deploying MuJoCo on Azure ML. It hit Hacker News. Microsoft's Azure team responded directly with a detailed technical explanation: we weren't on CIFS, we were on Blobfuse2; and Download Mode—staging data to local NVMe before execution—was the correct fix we missed.

The original article was honest about what hurt. This one is honest about what we missed. The repo is better for both.

What Happened

Last week we published Deploying MuJoCo on Azure ML: Surprising Pain Points, a field guide to the filesystem problems we hit trying to run MuJoCo on Azure ML. We at Haptic are working with a robotics AI research team to train robot control policies using a pipeline of VLA (Vision-Language-Action) models in simulation. Along the way we ran into broken symlinks on CIFS, metadata latency killing training scripts, and a storage pincer where neither local disk nor network storage was good enough on its own. We open-sourced simup to save the next person the debugging.

It hit Hacker News. People read it. Including, it turns out, people at Microsoft.

An Azure technician reached out directly with a detailed response explaining what we were actually running into, why the platform behaves the way it does, and (importantly) what we should have done instead.

This is the post where we tell you what they said, what it changes, and where we were wrong.

What Microsoft's Technician Actually Told Us

Four things, and they're all worth understanding if you're doing any serious compute on Azure ML.

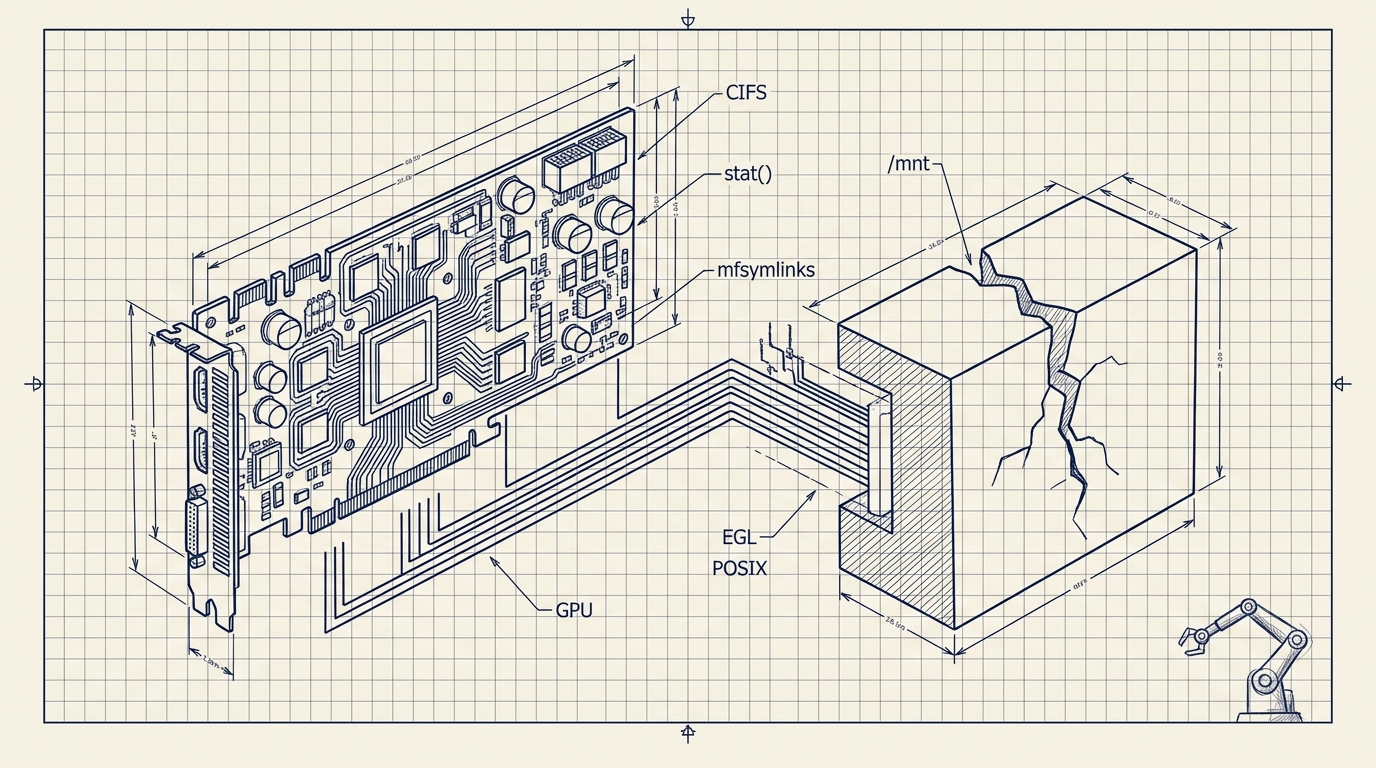

1. You're not on CIFS. You're on Blobfuse2 pretending to be a filesystem.

This was the big one. Azure ML's mount mode uses Blobfuse2 under the hood—a FUSE layer that translates filesystem operations into REST API calls against Azure Blob Storage. It is not a true POSIX filesystem. It looks like one. It has paths. You can ls it. But stat() is an HTTP request. Symlinks don't behave the way they would on ext4 or even NFS because the underlying storage has no concept of them.

Our original article described this as “CIFS/SMB breaking symlinks.” More precisely: the mount abstraction was translating filesystem semantics into something the backend doesn't natively support. The symptom was the same—symlinks silently failing, stat() costing seconds—but the root cause matters when you're choosing a fix.
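
A quick way to see these symptoms for yourself is to probe the directory directly. The following is a minimal sketch, not part of simup: `probe_filesystem` is a hypothetical helper that times `stat()` and checks whether a symlink survives creation. Repeated `stat()` calls on the same file may hit a client-side cache, so treat the number as a lower bound.

```python
import os
import statistics
import tempfile
import time


def probe_filesystem(directory: str, samples: int = 20) -> dict:
    """Measure stat() latency and check whether symlinks survive in `directory`."""
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        target = os.path.join(scratch, "target.txt")
        with open(target, "w") as fh:
            fh.write("probe")

        # Time repeated stat() calls; on a FUSE-over-REST mount each one
        # may turn into a network request.
        timings = []
        for _ in range(samples):
            start = time.perf_counter()
            os.stat(target)
            timings.append(time.perf_counter() - start)

        # Create a symlink and confirm it is still a symlink afterwards.
        link = os.path.join(scratch, "link.txt")
        try:
            os.symlink(target, link)
            symlinks_ok = os.path.islink(link)
        except OSError:
            symlinks_ok = False

    return {
        "median_stat_seconds": statistics.median(timings),
        "symlinks_ok": symlinks_ok,
    }


if __name__ == "__main__":
    # Point this at the mounted path and at local disk to compare.
    print(probe_filesystem(tempfile.gettempdir()))
```

Running it once against the mount and once against local disk makes the difference concrete before you commit to a fix.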

2. Download Mode exists, and it's what we should have been using.

Azure ML has a feature called Download Mode. Instead of mounting remote storage and running your code against it (which is what we were doing), Download Mode stages data onto the VM's local NVMe before execution begins. The data lives on fast local disk. Symlinks work. stat() is native speed. Your training script sees a normal filesystem because it is a normal filesystem.

This is not the same as copying to the OS disk. The OS disk on these VMs is small—that was half the storage pincer we described. Download Mode targets the local NVMe/SSD, which is a different device with substantially more capacity and throughput.

The staging adds time before your job starts, but the job itself runs at local-disk speed instead of fighting a FUSE layer on every I/O call. For workloads like ours—thousands of parquet files, heavy enumeration at startup, symlink-dependent installs—this is a categorical improvement, not an incremental one.
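
In the Azure ML Python SDK v2, the access mode is set per job input. Here is a minimal sketch of what that could look like, assuming the `azure-ai-ml` package is installed. The `./src` folder, `train.py`, the input name `training_data`, and every argument value are hypothetical placeholders, not taken from simup.

```python
def build_download_mode_job(datastore_path: str, compute: str, environment: str):
    """Build (but do not submit) a command job whose input is staged locally."""
    # Imported here so this module can be loaded without the SDK installed.
    from azure.ai.ml import Input, command
    from azure.ai.ml.constants import AssetTypes, InputOutputModes

    return command(
        code="./src",  # hypothetical folder holding train.py
        command="python train.py --data ${{inputs.training_data}}",
        inputs={
            "training_data": Input(
                type=AssetTypes.URI_FOLDER,
                path=datastore_path,
                # "download" copies the folder onto the compute before the
                # command runs, instead of mounting the remote storage.
                mode=InputOutputModes.DOWNLOAD,
            )
        },
        environment=environment,
        compute=compute,
    )
```

The returned job would then be submitted through an `MLClient`, for example with `ml_client.jobs.create_or_update(job)`. The training script receives a plain local path in `--data` and needs no Azure-specific code.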

3. Blob Storage or ADLS should be your source of truth, not Azure Files.

We had been using Azure Files as the backing store, which is SMB-based and optimized for lift-and-shift Windows workloads. For Linux HPC and ML workloads with high random I/O, the recommended path is Azure Blob Storage or Azure Data Lake Storage (ADLS). These back the Blobfuse2 layer more naturally and are what Download Mode is designed to pull from.

The practical upshot: if you're on Azure ML and your workload touches a lot of files, your storage architecture should be Blob or ADLS as the source of truth, with Download Mode staging to local NVMe for execution. Not Azure Files mounted as a pseudo-filesystem you run against directly.

4. On the NDm A100 cluster: local NVMe is fixed. Plan around it.

We're on a Microsoft for Startups GPU allocation, which uses NDm A100 nodes. The local NVMe capacity on these is fixed; there's no “give me more local disk” option. Microsoft confirmed this. If your dataset exceeds what fits on local NVMe, the practical options are:

- Reduce what gets staged. Don't copy everything—selectively stage what the current job needs.

- Selective staging per job. Structure your pipeline so each job pulls only its slice.

- Attach managed disks. You can bolt on additional Azure Managed Disks for overflow, but they're slower than NVMe. You're trading speed for capacity.

There's no magic option here. But knowing the constraint is fixed rather than tunable changes how you architect around it.
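
Because the ceiling is fixed, it is worth checking before staging rather than discovering a full disk mid-copy. A minimal sketch, assuming you know the size of the slice you intend to stage; `fits_on_local_disk` and the 20% headroom default are illustrative choices, not a Microsoft recommendation.

```python
import shutil


def fits_on_local_disk(required_bytes: int, path: str, headroom: float = 0.2) -> bool:
    """Return True if `required_bytes` fits under `path` with headroom to spare."""
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    free = shutil.disk_usage(path).free
    # Keep a fraction of free space unallocated for checkpoints, caches,
    # and temporary files written during training.
    return required_bytes <= free * (1 - headroom)
```

If the check fails, the job should fall back to staging a smaller slice rather than proceeding and filling the disk.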

What We Got Right, What We Missed

We got right: the core observation that Azure ML's default filesystem behavior is hostile to research code that assumes POSIX semantics. The pain was real. The symptoms were accurately described. The pre-flight checklist still helps.

We missed: the distinction between CIFS and Blobfuse2, and—more importantly—that Download Mode was available as a first-class solution. Our original fix was working around the mount's limitations (Premium tier, cache redirection, mfsymlinks). The correct fix was not running against the mount at all.

In retrospect, this is the kind of thing you miss when you're debugging from the symptom up rather than from the architecture down. We were GCP/AWS people dropped into Azure for the first time, and we reached for the tools we understood (mount options, storage tiers) rather than the tool Azure actually built for the problem.

Fair cop.

What This Changes in simup

We're implementing the Microsoft-recommended approach on a new branch: download-mode. We'll open a PR against main once it's tested.

Before (main branch, current)

startup_script.sh:

→ Mount Azure Files via CIFS/Blobfuse2

→ Add mfsymlinks to mount options

→ Redirect caches to /mnt

→ Hope that stat() latency is survivable

→ Run training directly against mounted storage

After (download-mode branch)

startup_script.sh:

→ Configure Blob Storage / ADLS as data source

→ Use Download Mode to stage data to local NVMe

→ Symlinks work natively (it's a real filesystem now)

→ stat() is local-disk speed

→ Run training against local NVMe

→ No mount to fight

The specific changes are in startup_script.sh and azure_vm.py. The CLI (cli.py, config.py) stays the same: simup still gives you simup deploy and everything works the same from the user's perspective. The infrastructure underneath is just doing the right thing now instead of the hard thing.
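
The branch's actual staging code isn't shown here. On a plain VM, outside an Azure ML job, one common way to do the "stage data to local NVMe" step is AzCopy. The sketch below assumes `azcopy` is installed and already authenticated; `build_stage_command` and `stage_to_local` are hypothetical names, and the source URL and target directory are whatever your deployment uses.

```python
import subprocess
from pathlib import Path


def build_stage_command(source_url: str, local_dir: str) -> list[str]:
    """Return the azcopy invocation that copies a blob prefix to local disk."""
    return ["azcopy", "copy", source_url, local_dir, "--recursive"]


def stage_to_local(source_url: str, local_dir: str) -> None:
    """Create the target directory and run the copy, failing loudly on error."""
    Path(local_dir).mkdir(parents=True, exist_ok=True)
    # check=True raises CalledProcessError if azcopy exits non-zero, so a
    # failed staging step stops the job instead of training on partial data.
    subprocess.run(build_stage_command(source_url, local_dir), check=True)
```

Keeping the command construction separate from its execution makes the staging step easy to log and to test without touching the network.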

What goes away

| Original workaround | Status in download-mode |
|---|---|
| mfsymlinks in /etc/fstab | Unnecessary. Local NVMe supports real symlinks. |
| Premium-tier Azure Files migration | Unnecessary. Not running against Azure Files at all. |
| Cache redirection to /mnt | Still useful for pip/uv/torch caches, but no longer load-bearing for correctness. |
| UV_LINK_MODE=copy | Probably unnecessary. Hardlinks work on local disk. Worth testing before removing. |
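
For the "worth testing" row, a small probe can confirm hardlink support before the override is dropped. This is a sketch with a hypothetical helper name, `hardlinks_supported`. Note that a hardlink only works within a single filesystem, so the cache and the virtual environment would both need to live on the local disk being probed.

```python
import os
import tempfile


def hardlinks_supported(directory: str) -> bool:
    """Return True if a hardlink can be created inside `directory`."""
    with tempfile.TemporaryDirectory(dir=directory) as scratch:
        source = os.path.join(scratch, "source.bin")
        with open(source, "wb") as fh:
            fh.write(b"probe")
        link = os.path.join(scratch, "link.bin")
        try:
            os.link(source, link)
        except OSError:
            return False
        # Both names should refer to the same file with a link count of 2.
        return os.path.samefile(source, link) and os.stat(source).st_nlink == 2
```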

What stays the same

The GPU/rendering setup—EGL installation, headless NVIDIA drivers, render group membership—is unchanged. That was never a storage problem. The pre-flight checklist items around GPU quota and driver versions still apply.

What the Setup Actually Looks Like

We've started onboarding a new client to this same GPU node, so we got to put Microsoft's advice into practice immediately. Here's what we learned about the practical reality of Download Mode that the documentation doesn't make obvious.

Your data lives in two places, not one. This is the actual mental model shift. Before, we had Azure Files and ran against it directly. Now you need a durable location (Azure Blob Storage) where the client uploads data and where results persist across VM restarts, and a fast location (local NVMe) where training actually runs. You manage the lifecycle between them.

Client uploads data → Blob Storage container (durable, slow)

↓ stage before training

Local NVMe (ephemeral, fast)

↓ train at full speed

GPU runs without I/O throttling

↓ sync after training

Blob Storage (checkpoints saved durably)

The NVMe is ephemeral. This is the thing nobody warns you about. If your VM gets deallocated, everything on local NVMe is gone. Azure Files and Blob Storage survive. NVMe does not. If you train for twelve hours and your checkpoints are only on NVMe, a VM deallocation means you're starting over. Sync checkpoints back to Blob Storage periodically or at the end of every run.
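
The periodic sync can be sketched as an incremental copy. To keep the example self-contained, `durable_dir` below is an ordinary directory standing in for the durable store; in a real deployment the copy would be an upload to Blob Storage. `sync_checkpoints` is a hypothetical helper, not simup's implementation.

```python
import shutil
from pathlib import Path


def sync_checkpoints(local_dir: str, durable_dir: str) -> list[str]:
    """Copy checkpoints that are missing or changed in `durable_dir`."""
    src_root, dst_root = Path(local_dir), Path(durable_dir)
    copied = []
    for src in sorted(src_root.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(src_root)
        dst = dst_root / rel
        if dst.exists():
            s, d = src.stat(), dst.stat()
            # Skip files that already match by size and modification time.
            if s.st_size == d.st_size and int(s.st_mtime) <= int(d.st_mtime):
                continue
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copy2 preserves the modification time
        copied.append(rel.as_posix())
    return copied
```

Calling this from the training loop every N steps, and once more at exit, bounds the work lost to a deallocation to the interval between syncs.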

Blob Storage, not Azure Files. Our first instinct was to create another Azure Files share for the new client. That would have put us right back where we started: SMB mounts, CIFS semantics, the same metadata latency. The correct move is a Blob Storage container. It's what Download Mode is designed to pull from, and it avoids the entire class of filesystem problems from Part 1.

Staging 200GB takes minutes, not hours. For our client's initial dataset, staging from Blob Storage to local NVMe is a one-time cost at the start of each job. For 200GB over Azure's internal network, this is fast. The training run itself then operates at full NVMe speed—no FUSE layer, no HTTP round-trips, no 3.3-second stat() calls. The tradeoff is obvious once you see it.
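
The arithmetic behind "minutes, not hours" is simply size divided by sustained throughput. The sketch below makes it explicit; the throughput figures are placeholders chosen for illustration, not measurements from our node or numbers from Microsoft.

```python
def staging_minutes(dataset_gb: float, throughput_gb_per_s: float) -> float:
    """Estimate staging time in minutes for a given sustained throughput."""
    if throughput_gb_per_s <= 0:
        raise ValueError("throughput must be positive")
    return dataset_gb / throughput_gb_per_s / 60


if __name__ == "__main__":
    # Throughput values are placeholders, not measurements from Azure.
    for rate in (0.25, 0.5, 1.0):
        print(f"{rate} GB/s -> {staging_minutes(200, rate):.1f} min")
```

At a hypothetical 1 GB/s, 200GB works out to a little over three minutes. Measure your own throughput before relying on any estimate.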

Plan for growth. Our client starts at 200GB but expects to scale to terabytes. The NVMe on this node is 3.5TB—fixed, non-expandable. As long as a single job's working set fits on NVMe, you stage just what that job needs. When datasets exceed NVMe capacity, you partition: each job pulls its slice. The durable store holds everything; the NVMe is a fast-access cache, not a warehouse.
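
Partitioning can be as simple as a greedy pass over file sizes. A minimal sketch, assuming you have a listing of file names and sizes from the durable store; `partition_by_capacity` is a hypothetical helper, and the capacity you pass in should be your own budget (NVMe size minus headroom), not a figure from this post.

```python
def partition_by_capacity(file_sizes: dict[str, int], capacity_bytes: int) -> list[list[str]]:
    """Greedily group files into slices whose total size fits `capacity_bytes`."""
    slices: list[list[str]] = []
    current: list[str] = []
    used = 0
    # Sorting by name keeps related shards (e.g. numbered parquet files) together.
    for name, size in sorted(file_sizes.items()):
        if size > capacity_bytes:
            raise ValueError(f"{name} alone exceeds the capacity budget")
        if used + size > capacity_bytes and current:
            slices.append(current)
            current, used = [], 0
        current.append(name)
        used += size
    if current:
        slices.append(current)
    return slices
```

Each resulting slice becomes the staging list for one job. This greedy pass does not minimize the number of slices; it only guarantees that each one fits.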

The Updated Mental Model

If you're coming from GCP or AWS and landing on Azure ML for the first time, here's the version of the mental model I wish I'd had:

Don't treat Azure ML mounted storage as a filesystem. It's an API in a trenchcoat. It will nod politely when you use stat() and then charge you three seconds of latency per call. It will accept your symlink and give you back a regular file. It will not apologize.

Use Download Mode. Stage your data to local NVMe before execution. Let your training code see an actual POSIX filesystem. Pay the staging time upfront rather than paying the I/O tax on every operation.

Keep your source of truth in Blob Storage or ADLS. Not Azure Files. The whole pipeline flows better when the backing store matches what Download Mode expects.

Plan around fixed NVMe capacity on GPU nodes. If your dataset is larger than local NVMe, stage selectively per job. Don't try to stage everything.

Thank You

To the Hacker News community—thanks for reading, sharing, and for the conversations in the comments. A few people DM'd with their own Azure war stories, and several pointed us toward things we'd missed. That's the internet working the way it's supposed to.

To the Microsoft Azure team—thank you for responding directly and substantively. You could have ignored a blog post from a small startup complaining about your platform. Instead you sent an engineer who explained exactly what was happening and how to fix it. That's the right move and we appreciate it.

The original article was honest about what hurt. This one is honest about what we missed. The repo is better for both.

The download-mode branch is in progress at github.com/Haptic-AI/one-click-mujoco-azure. If you've used Download Mode for similar workloads and have notes on staging performance or NVMe capacity planning, we'd love to hear from you.

If you're deploying simulation workloads to cloud infrastructure and want help not repeating our mistakes, we can help with that.