TL;DR

- Festivus is an open source dataset that tells you which AI policies actually work on which robots for which tasks. 22,592 records and counting.

- Data available via API. Built for agents first (we expect them to do 99% of reads and edits), but humans can jump in too. Mistakes are easy to revert.

- More coming soon: data on GitHub, deeper compatibility checks, and tighter ties to One-Click Deploy.

Two years ago, if you asked the internet “what robot can fold laundry,” you got a Reddit thread, three vendor demos, and a 2019 paper that doesn't run on your hardware. The data lived in HuggingFace pulls, arXiv PDFs, manufacturer spec sheets, and Discord screenshots from a guy named Kevin. Nobody had counted.

So we did.

1. Inspiration

Two days ago, Diego laid out four open pains in the “Hugging Face for robotics” meme. Festivus is a seed, a germ, of an open dataset that directly addresses the first pain point: you can't tell which models actually work with your robot, in which environments, and on which tasks.

“Many Christmases ago, I went to buy a doll for my son. I reached for the last one they had, but so did another man. As I rained blows upon him, I realized there had to be another way.”

“What happened to the doll?”

“It was destroyed. But out of that, a new holiday was born. A Festivus... for the rest of us.”

— Seinfeld, “The Strike”

Festivus embodies the dumb + simple idea of making open source physical AI dumb + simple for “the rest of us”. The beginners, the new players who just want to see what works with what. I want a robot to do X in Y environments... what policies have been tried? Which work best?

The design intent of Festivus:

- Built for agents. It has to be easy for agents to use the data, build with it, or contribute/edit it. We are assuming agents are 99% of the readers and contributors.

- A kernel to build from. Festivus is deliberately small. It is far, far from complete, but we wanted to open earlier rather than later. We'll share some of what we're building on top of it soon.

- A dataset, not a product. We don't have a hot take on AGI. We have JSON. We deliberately wanted the kernel to be dumb and simple text that folks can see, edit, correct, and bless.

- A higher layer, not a replacement. Festivus does not compete with HuggingFace, GitHub, or the academic ecosystem. It reorganizes and connects the primitives that already live there, so they're more useful for physical AI.

- A lightning rod. Somewhere to collaborate, share, nitpick, criticize, improve. We'd rather be wrong in public than right in private.

- Non-zero sum. Festivus has to help, not extract from other networks. Papers stay on arXiv, code on GitHub, models and datasets on HuggingFace, and so on.

2. Prior art

Festivus isn't the first attempt at organizing this corner of the field. A few projects we owe credit to, and that we'd point you at if Festivus doesn't have what you need:

- HuggingFace LeRobot — the canonical home for open robotics datasets and pretrained policies. Festivus links to LeRobot artifacts; we don't re-host them.

- Humanoids.fyi — a community directory of humanoid robots with specs you can compare side-by-side. One record per robot, done right.

- IEEE Robots Guide — IEEE Spectrum's long-running consumer-facing catalog of ~200 robots, with photos, videos, and ratings.

- Open X-Embodiment / RT-X — the cross-institution dataset combining 1M+ trajectories across 22+ robot embodiments. The clearest precedent for indexing across embodiments at all.

- MuJoCo Menagerie — DeepMind's gold-standard collection of clean MJCF models for commercial and research robots. If you're working in MuJoCo, start here.

- robot_descriptions.py — a Python package giving one-line access to ~70 URDF and MJCF models from across the open ecosystem.

- awesome-robotics — the canonical hand-curated reading list for the field. If we missed something major, it's probably already on this list.

- RLBench, ManiSkill, robosuite — benchmark suites that ship curated bundles of (robot × task × environment). Each one is a kind of catalog inside its own framework.

If you're building one we should know about — or one we forgot — tell us. We'd rather link to it than pretend it doesn't exist.

3. What Festivus is

A catalog tells you what exists. A map tells you what works with what.

Festivus is both. Pick a task (“fold a t-shirt”). Pick a robot (“Unitree G1”). Festivus tells you which policies have been trained for that combination, which benchmarks measure it, and which datasets you can train on. The raw pieces already live on HuggingFace, GitHub, arXiv, and a hundred manufacturer pages. You can think of Festivus as the join of these. That sounds easy, but joining them produces a combinatorial explosion and plenty of gaps, which we make obvious so people can fill them in.
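To make “the join of these” concrete, here is a minimal Python sketch under stated assumptions: links are hypothetical `(kind, artifact, task, robot)` rows, and the artifact IDs are placeholders, not real Festivus records. It groups links by `(task, robot)` cell and returns one row per lookup. An empty cell comes back as empty lists, so the gap stays visible.

```python
from collections import defaultdict

KINDS = ("policy", "benchmark", "dataset")


def build_index(links):
    """Group (kind, artifact, task, robot) link rows by their (task, robot) cell."""
    index = defaultdict(lambda: {kind: [] for kind in KINDS})
    for kind, artifact, task, robot in links:
        if kind not in KINDS:
            raise ValueError(f"unknown link kind: {kind}")
        index[(task, robot)][kind].append(artifact)
    return index


def lookup(index, task, robot):
    """Return the single joined row for one (task, robot) pair.

    Uses .get() so a lookup never creates a cell; empty cells return empty lists.
    """
    cell = index.get((task, robot), {kind: [] for kind in KINDS})
    return {"task": task, "robot": robot, **{kind: sorted(cell[kind]) for kind in KINDS}}


# Placeholder rows for illustration only; these are not real Festivus records.
links = [
    ("policy", "example-policy-1", "fold a t-shirt", "Unitree G1"),
    ("dataset", "example-dataset-1", "fold a t-shirt", "Unitree G1"),
    ("benchmark", "example-bench-1", "fold a t-shirt", "Unitree G1"),
    ("policy", "example-policy-2", "open a drawer", "Unitree G1"),
]
index = build_index(links)
print(lookup(index, "fold a t-shirt", "Unitree G1"))
```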

Beginners don't need a search engine that returns “12,409 results.” They need an answer. Festivus is the search engine that read the 12,409 results and came back with one row: what fits your robot, what works for your task, where to download it. The other 12,408 are fine. They're just not the answer to your question.

And once you have that row, Festivus is wired into our One-Click Policy Deploy: paste the policy URL, get a 30-second video of the robot moving in sim about 90 seconds later. The full mechanics are in the One-Click write-up.

4. The agent is a first-class contributor

Paste this to your agent: “Go to festivus.hapticlabs.ai/llm and pick up the latest data on policies that run on a 7-DOF arm.” It will. The OpenAPI 3 spec at api.festivus.hapticlabs.ai/v1/openapi.json describes every endpoint, parameter, and Pydantic-validated schema. Drop it into your agent's system prompt.
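For an agent (or a person) that wants the endpoint list without reading the whole spec, here is a minimal sketch using only the Python standard library. It assumes the spec is served over `https` at the URL above and follows the standard OpenAPI 3 `paths` layout; nothing here is taken from the Festivus codebase.

```python
import json
import urllib.request

# Assumption: the spec is reachable over https at this address.
SPEC_URL = "https://api.festivus.hapticlabs.ai/v1/openapi.json"
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def fetch_spec(url=SPEC_URL, timeout=10):
    """Download and parse the OpenAPI document (requires network access)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def list_endpoints(spec):
    """Flatten an OpenAPI 3 'paths' object into sorted 'METHOD /path' strings.

    Path items can also carry keys like 'parameters' or 'summary'; those are skipped.
    """
    endpoints = []
    for path, item in spec.get("paths", {}).items():
        for key in item:
            if key.lower() in HTTP_METHODS:
                endpoints.append(f"{key.upper()} {path}")
    return sorted(endpoints)
```

`list_endpoints(fetch_spec())` returns strings of the form `METHOD /path`. The network call lives in its own function so the parsing can be tested offline.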

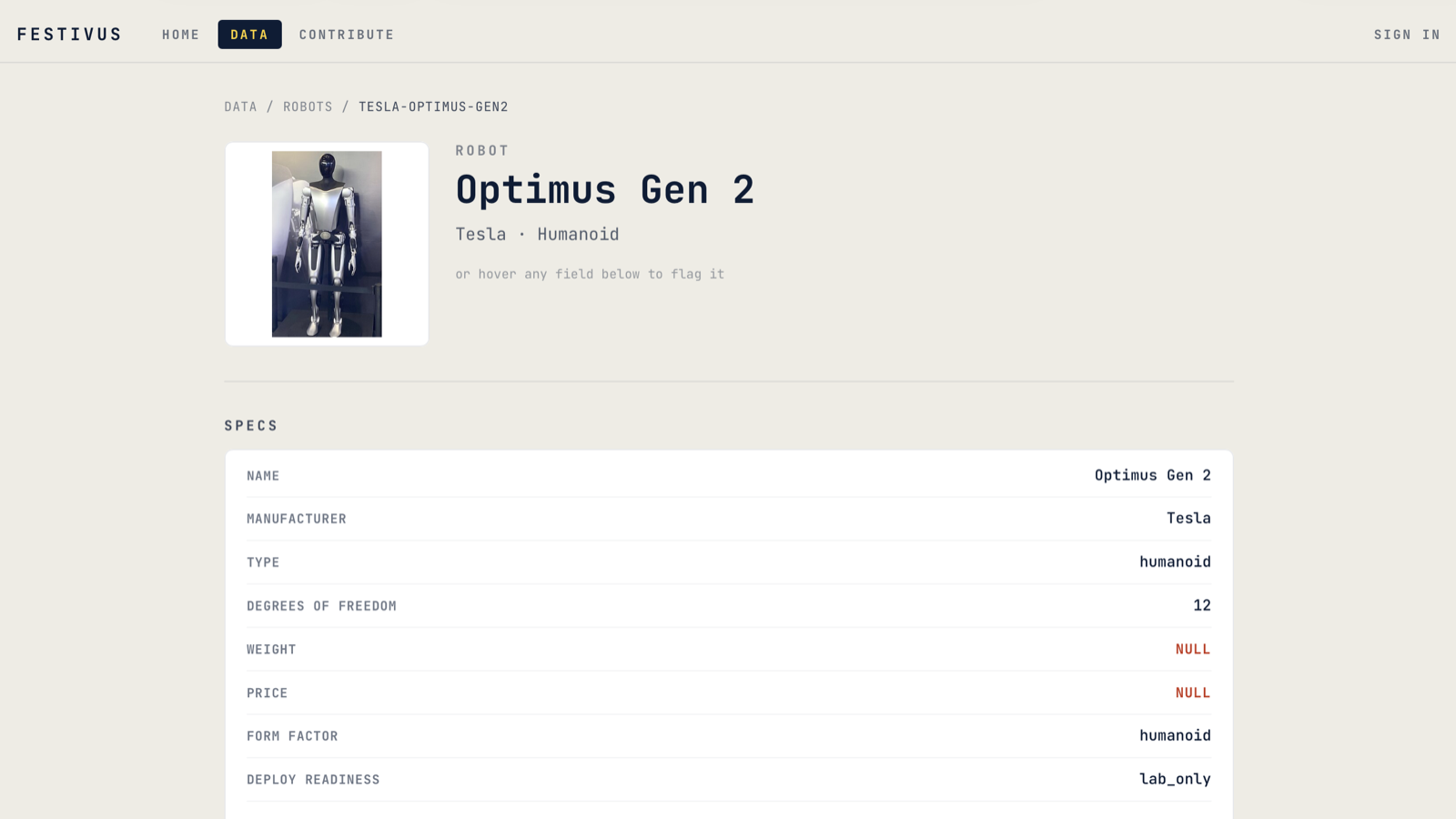

Editing with Claude. The flag is the door. Click it on any value you don't trust — a NULL weight, a missing actuator, a stale price — and a chat panel opens. Type what should change. The agent reads the schema, proposes a diff, asks you to confirm. You click UPDATE. The mutation goes live. A moderator can revert it.

No issue tracker. No queue. See it, flag it, fix it.
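The flag-to-UPDATE loop boils down to propose, confirm, apply, revert. Below is a minimal in-memory sketch of that pattern, assuming records are plain dicts keyed by a hypothetical slug; persistence, moderator permissions, and the chat step are not modeled.

```python
import itertools

_MISSING = object()  # marks a field that did not exist before a mutation


class MutationLog:
    """Apply diffs to records and keep enough history to revert each one."""

    def __init__(self, records):
        self.records = records        # slug -> dict of fields
        self._history = {}            # handle -> (slug, previous field values)
        self._ids = itertools.count(1)

    def propose(self, slug, changes):
        """Return {field: (old, new)} for fields that would change; no writes."""
        current = self.records[slug]
        return {f: (current.get(f), v) for f, v in changes.items() if current.get(f) != v}

    def apply(self, slug, diff):
        """Apply a diff the user confirmed; return a handle that can undo it."""
        record = self.records[slug]
        previous = {f: record.get(f, _MISSING) for f in diff}
        for field_name, (_old, new) in diff.items():
            record[field_name] = new
        handle = next(self._ids)
        self._history[handle] = (slug, previous)
        return handle

    def revert(self, handle):
        """Restore the exact prior state, including fields that were absent."""
        slug, previous = self._history.pop(handle)
        record = self.records[slug]
        for field_name, old in previous.items():
            if old is _MISSING:
                record.pop(field_name, None)
            else:
                record[field_name] = old
```

Storing the prior value (or its absence) per field is what makes a revert exact: a NULL weight goes back to NULL, and a field that did not exist disappears again.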

Here's the loop end to end, on a real record (Tesla Optimus Gen 2, missing weight):


For bulk work, you want a script. Adding a sensor type to a hundred records used to be a week of pull requests. With the Claude Agent SDK pointed at the eleven /v1/write/{table}/{slug} endpoints, it's a five-minute prompt. Every mutation has a revertable handle.
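A dry-run sketch of such a bulk job, assuming a hypothetical `robots` table, a hypothetical `sensors` list field, and a JSON `POST` body; the actual method and payload for `/v1/write/{table}/{slug}` should be read from the OpenAPI spec. Planning the writes before sending any makes the batch reviewable, and rerunning it skips records that already have the sensor.

```python
import json
import urllib.request

API_BASE = "https://api.festivus.hapticlabs.ai"  # assumption: https scheme


def plan_sensor_additions(records, sensor, table="robots"):
    """Dry run: list (url, payload) writes that add `sensor` where it is missing.

    `records` are dicts with hypothetical 'slug' and 'sensors' keys.
    Input records are not mutated.
    """
    plan = []
    for record in records:
        sensors = record.get("sensors") or []
        if sensor in sensors:
            continue  # already present, so reruns are idempotent
        url = f"{API_BASE}/v1/write/{table}/{record['slug']}"
        plan.append((url, {"sensors": sensors + [sensor]}))
    return plan


def send(url, payload, method="POST", timeout=10):
    """Send one planned write. Method and body shape are assumptions."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method=method,
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)
```

Review the output of `plan_sensor_additions`, then call `send` on each entry once it looks right.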

As far as we can tell, this is the first open robotics dataset where an AI agent can contribute on equal footing with a human: a real read-write surface, the same safety net the in-app flow uses. The agent is a contributor, not an admin. But the contributor never sleeps.

5. Where we are today: small on purpose

| Domain | Records |

|---|---|

| Datasets | 9,962 |

| Policies | 9,296 |

| Benchmarks | 3,228 |

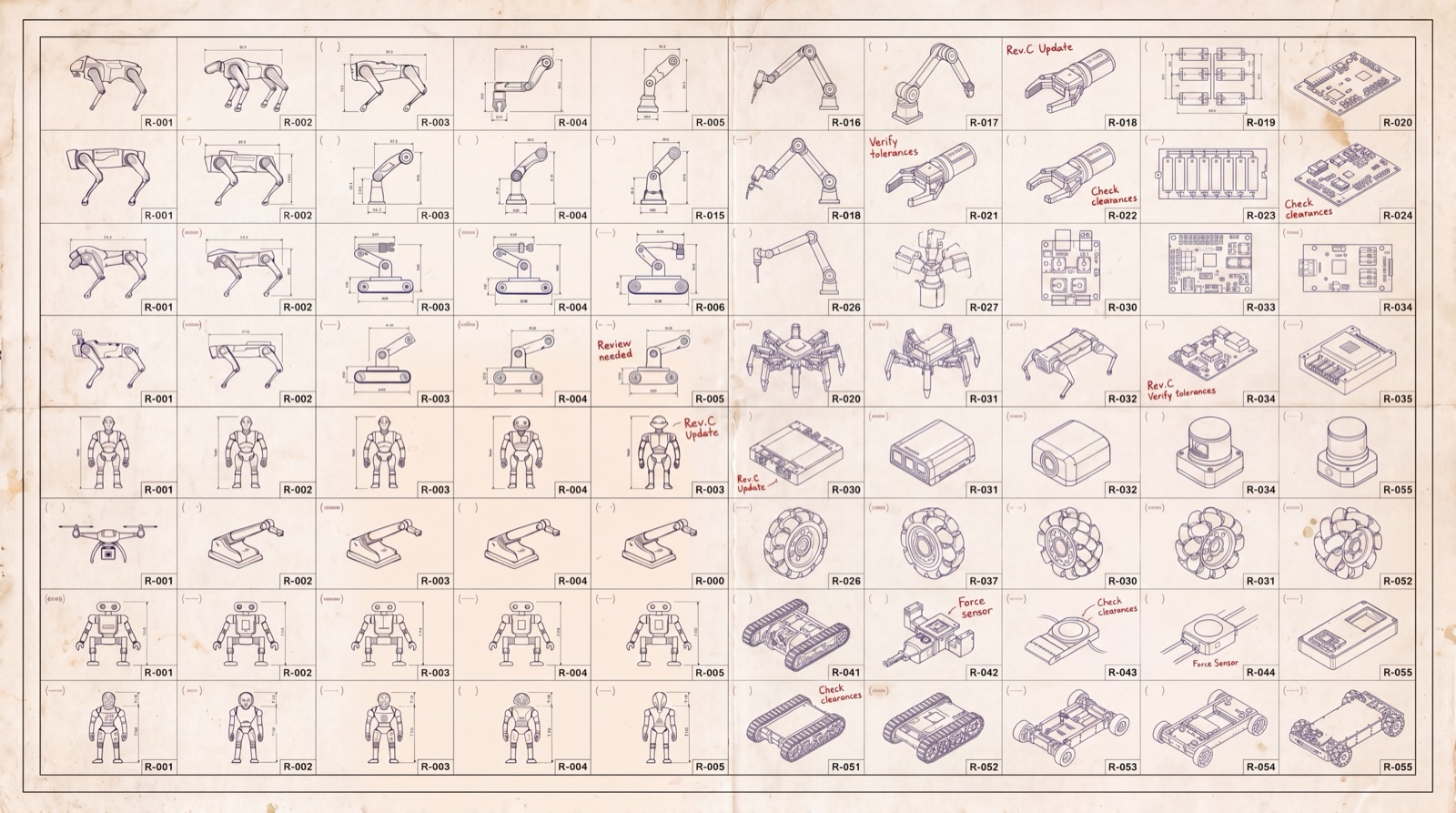

| Robots | 60 |

| Deploy notes | 35 |

| Tasks | 10 |

| Environments | 1 |

| Papers | 0 |

Hit /v1/stats and you get JSON with the live count for every domain, straight from the database. The schema is Pydantic. The tests pass. No dashboard built on a dashboard, no PDF behind a sales call.
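A quick consistency check on the numbers above, assuming `/v1/stats` returns a flat `{domain: count}` object (the key names below are guesses, not the documented response). Summing the table's counts reproduces the headline figure.

```python
import json


def total_records(stats):
    """Sum per-domain counts from a /v1/stats-style payload.

    Assumes a flat {domain: count} JSON object; the live shape may differ.
    """
    return sum(int(count) for count in stats.values())


# Counts copied from the table above; key names are hypothetical.
stats = json.loads("""{
  "datasets": 9962, "policies": 9296, "benchmarks": 3228, "robots": 60,
  "deploy_notes": 35, "tasks": 10, "environments": 1, "papers": 0
}""")
print(total_records(stats))  # 22592
```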

Why sixty robots? We started small on purpose. In practice there are thousands of robots, but we need a solid base to start from. Even these sixty should be further verified against manufacturer specs and against real-world reports of who has tried which policies on which tasks and in which environments.

Today every robot in Festivus has policies, datasets, and benchmarks attached. That's a connected graph, not a complete one. Connected means you can traverse it; complete will mean every (task × robot × policy) cell that should have an entry does.

A connected graph today. A correct graph next.
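The gap between connected and complete can be measured. A minimal sketch, assuming hypothetical `(task, robot, policy)` link triples: “connected” here is the loose sense used above (every robot has at least one link), while coverage counts how many `(task, robot)` cells of the full grid have any policy at all.

```python
from itertools import product


def coverage(tasks, robots, policy_links):
    """Report which (task, robot) cells have at least one policy.

    policy_links: iterable of (task, robot, policy) triples (hypothetical shape).
    Returns (filled_fraction, sorted list of empty cells).
    """
    filled = {(task, robot) for task, robot, _policy in policy_links}
    cells = list(product(tasks, robots))
    empty = sorted(cell for cell in cells if cell not in filled)
    fraction = (len(cells) - len(empty)) / len(cells) if cells else 0.0
    return fraction, empty


def every_robot_linked(robots, policy_links):
    """Loose 'connected' check: each robot has at least one policy link."""
    linked = {robot for _task, robot, _policy in policy_links}
    return all(robot in linked for robot in robots)
```

The raw grid overstates what should be filled: a laundry-folding cell for a drone is empty for good reason, so a real completeness metric would first drop the impossible cells.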

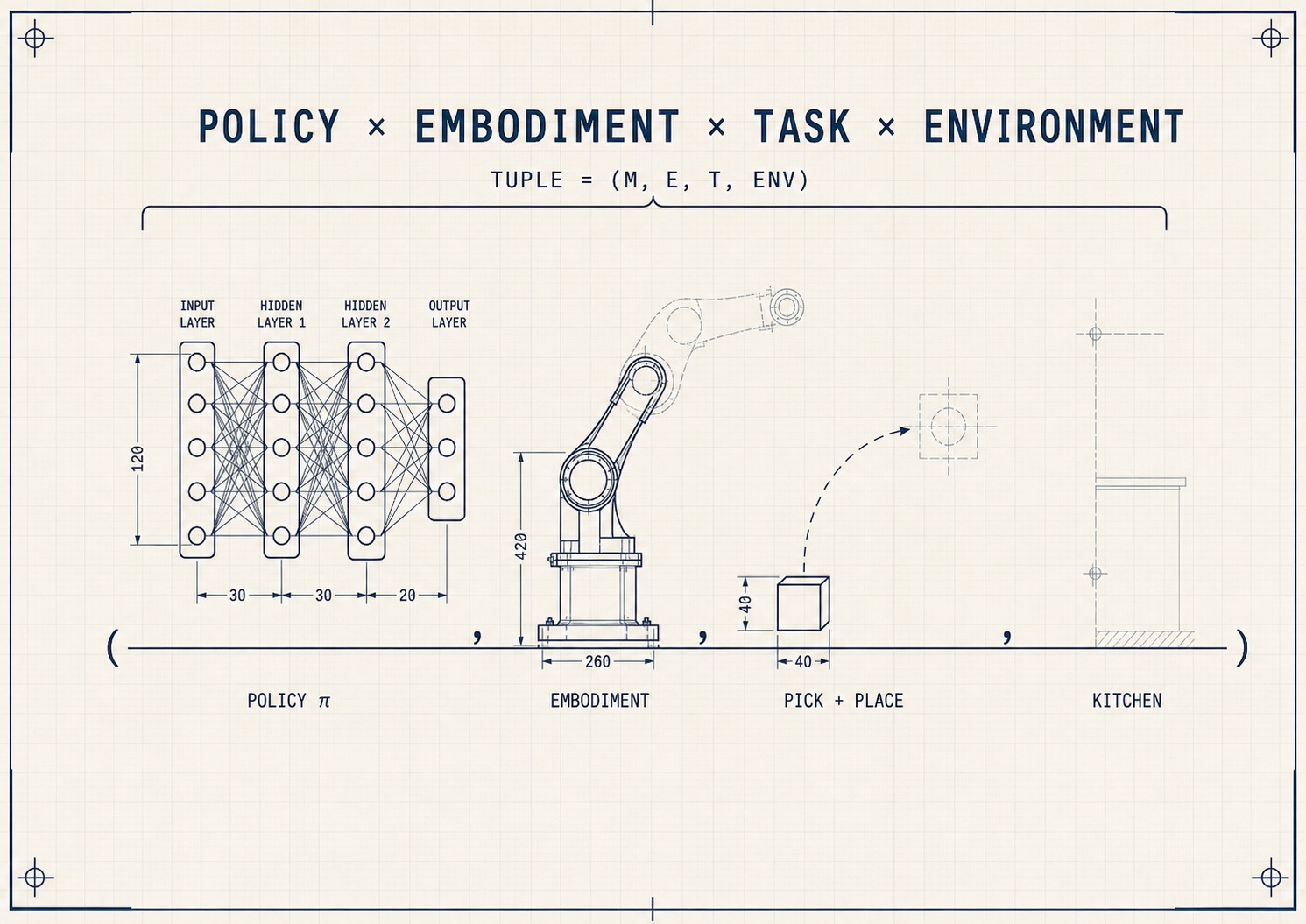

6. How we match policies to robots today

You're browsing policy listings on HuggingFace. One says “humanoid with two arms.” Will it run on your robot? Today Festivus checks six features:

- Form factor — humanoid, quadruped, arm, drone, mobile base

- Degrees of freedom — number of independently controlled joints

- Actuators — parallel jaw, suction, dexterous hand, none

- Sensors — RGB, depth, LiDAR, force/torque

- Deploy readiness — lab, warehouse, home, sim-only

- Weight — kilograms; matters for safety zones, payload, and whether your tabletop arm can lift the part

These six rule out the obviously-impossible: a quadruped policy on a robot arm, a force-feedback policy on a robot with no force sensors. They don't yet prove a policy will run.
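The six checks can be read as a filter that only ever says “no.” A minimal sketch with hypothetical field names and a hypothetical weight ceiling on the policy side; the source does not say how Festivus encodes these features or combines them. An empty result means “not ruled out,” which is weaker than “will run.”

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Robot:
    form_factor: str                                   # "humanoid", "arm", ...
    dof: int                                           # independently controlled joints
    actuators: Set[str] = field(default_factory=set)   # e.g. {"parallel jaw"}
    sensors: Set[str] = field(default_factory=set)     # e.g. {"rgb", "depth"}
    deploy_readiness: str = "lab"                      # "lab", "warehouse", "home", "sim-only"
    weight_kg: Optional[float] = None                  # None = unknown (NULL weight)


@dataclass
class PolicyNeeds:
    form_factor: str
    min_dof: int = 0
    actuators: Set[str] = field(default_factory=set)
    sensors: Set[str] = field(default_factory=set)
    deploy_readiness: Set[str] = field(default_factory=set)  # empty = no constraint
    max_weight_kg: Optional[float] = None                     # hypothetical ceiling


def rule_out(robot: Robot, needs: PolicyNeeds) -> List[str]:
    """Return reasons the policy is obviously impossible on this robot."""
    reasons = []
    if robot.form_factor != needs.form_factor:
        reasons.append(f"form factor {robot.form_factor} != {needs.form_factor}")
    if robot.dof < needs.min_dof:
        reasons.append(f"needs >= {needs.min_dof} DOF, robot has {robot.dof}")
    missing_actuators = needs.actuators - robot.actuators
    if missing_actuators:
        reasons.append(f"missing actuators: {sorted(missing_actuators)}")
    missing_sensors = needs.sensors - robot.sensors
    if missing_sensors:
        reasons.append(f"missing sensors: {sorted(missing_sensors)}")
    if needs.deploy_readiness and robot.deploy_readiness not in needs.deploy_readiness:
        reasons.append(f"deploy readiness {robot.deploy_readiness} not accepted")
    # An unknown weight cannot rule anything out; it stays a gap to fill.
    if (needs.max_weight_kg is not None and robot.weight_kg is not None
            and robot.weight_kg > needs.max_weight_kg):
        reasons.append(f"weight {robot.weight_kg} kg > {needs.max_weight_kg} kg")
    return reasons
```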

We also publish honest hit rates from the Deploy loop, where “hit” means the policy compiled, ran, and produced a video without crashing: ~90% on Stable-Baselines3 with MuJoCo, ~70% on Stable-Baselines3 with non-MuJoCo backends, ~20–30% on CleanRL and Sample Factory. These are runs-without-crashing numbers, not task-success numbers. Task success requires a benchmark; the deploy loop just gets the policy off the page and onto a simulator.
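Because “hit” has a precise, narrow meaning, it helps to see it written down as code. A minimal sketch with hypothetical run fields: a run counts only if it compiled, ran, and produced a video, and rates are grouped per (framework, backend) pair to mirror the breakdown above. Nothing here measures task success.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class DeployRun:
    framework: str        # placeholder label, e.g. "sb3"
    backend: str          # placeholder label, e.g. "mujoco"
    compiled: bool
    ran: bool
    produced_video: bool


def is_hit(run: DeployRun) -> bool:
    """Compiled, ran, and produced a video. Says nothing about task success."""
    return run.compiled and run.ran and run.produced_video


def hit_rate(runs) -> float:
    """Fraction of runs that are hits; 0.0 for no runs."""
    runs = list(runs)
    return sum(is_hit(r) for r in runs) / len(runs) if runs else 0.0


def hit_rates_by_stack(runs) -> dict:
    """Group runs by (framework, backend) and compute a hit rate per group."""
    groups = defaultdict(list)
    for run in runs:
        groups[(run.framework, run.backend)].append(run)
    return {stack: hit_rate(group) for stack, group in groups.items()}
```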

7. What's next

The full compatibility check needs the contracts the SmolVLA-on-Franka user got burned by:

- Action space — exactly what commands the policy emits

- Observation space — exactly what it reads, including camera names and resolutions

- Joint limits — per-joint min/max, hard and soft

- Control rate — Hz the policy expects to run at

- Physics — friction, contact, inertial parameters from the robot description
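Most of these contracts reduce to field-by-field comparisons. A minimal sketch, assuming hypothetical contract shapes (action dimension, named cameras with resolutions, per-joint hard limits in radians, a control rate); soft limits and physics parameters are left out of this sketch.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class PolicyContract:
    action_dim: int
    cameras: Dict[str, Tuple[int, int]]       # camera name -> (width, height)
    joint_ranges: List[Tuple[float, float]]   # per-joint (min, max) commanded, rad
    control_hz: float


@dataclass
class RobotContract:
    action_dim: int
    cameras: Dict[str, Tuple[int, int]]
    joint_limits: List[Tuple[float, float]]   # per-joint hard (min, max), rad
    max_control_hz: float


def contract_mismatches(policy: PolicyContract, robot: RobotContract) -> List[str]:
    """List every contract the robot fails to honor; empty means all checks pass."""
    problems = []
    if policy.action_dim != robot.action_dim:
        problems.append(f"action dim {policy.action_dim} vs {robot.action_dim}")
    for name, res in policy.cameras.items():
        if name not in robot.cameras:
            problems.append(f"missing camera {name!r}")
        elif robot.cameras[name] != res:
            problems.append(f"camera {name!r} is {robot.cameras[name]}, expected {res}")
    if len(policy.joint_ranges) != len(robot.joint_limits):
        problems.append("joint count differs")
    else:
        pairs = zip(policy.joint_ranges, robot.joint_limits)
        for i, ((lo, hi), (lim_lo, lim_hi)) in enumerate(pairs):
            if lo < lim_lo or hi > lim_hi:
                problems.append(f"joint {i} range [{lo}, {hi}] exceeds [{lim_lo}, {lim_hi}]")
    if policy.control_hz > robot.max_control_hz:
        problems.append(f"needs {policy.control_hz} Hz, robot max {robot.max_control_hz} Hz")
    return problems
```

The resolution check is deliberately strict; a pipeline that resizes images before inference could relax it to a name-only match.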

Adding these to every record is the roadmap. The destination is a graph where “compatible” means compatible — where Festivus tells you a policy will run on your robot, and it does. A connected graph today, a correct graph next.

The thin rows in the table above are the other shape of “what's next,” and each one maps to one of Diego's other pains:

- Papers and benchmarks are sparse because pain #1 — model cards with real hardware results — needs to exist before paper-to-policy links mean anything.

- Environments is at one because pain #3 — sim configs as first-class artifacts — is its own platform problem.

- Robots is at sixty because pain #2 — orphaned robot descriptions — is the bottleneck. Adding a robot means verifying its URDF, its dynamics, its joint limits. We do that by hand. We don't fake it.

When an agent queries Festivus and the answer isn't there, Festivus says so and points at the empty record. Refusing to hallucinate matters because downstream agents depend on Festivus; a fabricated answer poisons whatever workflow consumes it.
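A minimal sketch of “say so and point at the empty record,” assuming records are nested dicts and a hypothetical `/{table}/{slug}` pointer format. A missing or NULL field comes back as an explicit `missing` status with a pointer, never as a plausible-looking default.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Answer:
    status: str            # "found" or "missing"
    value: Optional[Any]   # None whenever status is "missing"
    record: str            # pointer to the record, so the gap can be filled


def get_field(records, table, slug, field_name):
    """Return a field value, or an explicit 'missing' answer instead of a guess."""
    record = records.get(table, {}).get(slug)
    pointer = f"/{table}/{slug}"  # hypothetical pointer format
    if record is None or record.get(field_name) is None:
        return Answer("missing", None, pointer)
    return Answer("found", record[field_name], pointer)
```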

The benchmark layer is what closes the deploy loop end to end. That's on the roadmap too.

8. What's open today

“Open” gets weaponized, so let's be precise about where things actually stand:

- The API is open. Read and edit endpoints, public, no auth. Anyone or any agent can pull or contribute. CC-BY 4.0, just credit us.

- The frontend is open. Public repo on GitHub.

- The backend is open. Public repo on GitHub.

- The data itself is not yet a repo. We want it sitting as YAML or JSON on GitHub (and mirrored to HuggingFace) so you can clone, diff, and PR it like any other dataset. It's not there yet because the data is moving fast through the API right now, and we'd rather fix the schema bugs before freezing a file layout we'd have to break. Coming soon.

The last stretch is making sure edits from humans (PRs on GitHub, once the data lands there) and agents (hitting /v1/write today) don't drift into two different versions of the truth. Same checks, same moderator queue, same fresh data for everyone.
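A minimal sketch of “same checks, same queue,” with hypothetical field names and rules: both contribution paths call one validator and land in one moderator queue, so neither path can accept data the other would reject.

```python
FORM_FACTORS = {"humanoid", "quadruped", "arm", "drone", "mobile base"}
SOURCES = {"github_pr", "api_write"}  # hypothetical labels for the two paths


def validate_robot(record):
    """One set of checks for every contributor. Fields and rules are hypothetical."""
    errors = []
    if not record.get("slug"):
        errors.append("slug is required")
    if record.get("form_factor") not in FORM_FACTORS:
        errors.append("unknown form_factor")
    weight = record.get("weight_kg")
    if weight is not None and weight <= 0:
        errors.append("weight_kg must be positive")
    return errors


def ingest(record, source, queue):
    """Validate, then enqueue for moderation; the source does not change the rules."""
    if source not in SOURCES:
        raise ValueError(f"unknown source: {source}")
    errors = validate_robot(record)
    if not errors:
        queue.append((source, record))
    return errors
```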

Got opinions on what we should lock down first? Book time with the team.

We'd rather physical AI ship than be the only ones holding the keys.

9. Your turn

Most of what's known about getting a specific robot to do a specific task lives in the heads of people who've done it. Festivus is where they write it down.

If you're a roboticist: sign in, find a missing weight, a wrong actuator, a benchmark we forgot. The dataset gets better the more of you show up.

If you're new to physical AI: this is the map. Start at a task, follow it to a robot, follow that to a policy, hit deploy. Tell us what broke.

If you're building a different swing at one of Diego's other three pains: we want to hear about it. We don't want to own the answer; we want the answer to exist.

The longer version of how we think about this — what counts as a record, how we resolve conflicts, what we won't merge — lives in Festivus: Design Principles. Read it. Tell us where it's wrong.

22,592 records. Counted. Linked. Open.