TL;DR

While working with Georgia Tech researchers on robot simulation, a question kept nagging: why isn't there a Midjourney for physics simulation? So we dove in, built one, and deployed it. This post covers the prior art, how it works, and what we learned.

As part of building in public, you can play with the app or clone the repo.

Where Is the Midjourney for Simulation?

We at Haptic are working with AI researchers at Georgia Tech to train robot control policies using a pipeline of VLA (Vision-Language-Action) models in simulation. While deploying MuJoCo on cloud GPUs, wrangling physics engines, and watching robots learn to move, a question kept coming up.

Working in forward deployment with robotics researchers, I watched brilliant people spend days configuring environments just to test a hypothesis about how a robot might walk. The simulation itself, the interesting part, the reason anyone got into this field, was buried under layers of XML authoring, parameter tuning, and rendering pipelines.

While fighting the sims, I ran out of patience (maybe because, as Diego says, I am a software-first person) and asked myself: “Where is the Midjourney for simulations?”

The rest of the creative internet is already somewhere else entirely. You can type “astronaut riding a horse on Mars” into Midjourney and get four photorealistic images in seconds. You can describe a video to Runway and watch it render. The prompt-to-creation pattern has transformed every visual medium.

Every visual medium except the one grounded in actual physics.

I wanted to describe a scene and get back a physically real simulation I could actually use, without having to learn complex simulation software. Not generated video, but a real simulation.

Human Speak → Real physics engine simulation (MuJoCo, Isaac Sim, Unreal, etc.)

This felt like an obvious question. Which, in my experience, means either nobody's thought of it or everyone's thought of it and moved on for reasons that are hard to argue with (intractable scope, unclear business model, the physics alone being a multi-year research problem). I chose to find out which.

Prior Art

Well, lots of smart folks DID think of it. Seeing their work was exciting, and it inspired us, so it is worth sharing here.

The closest neighbors — LLMs generating real physics simulations:

- Genesis — The most ambitious effort in the room. A 20+ lab collaboration pairing a blazing-fast Python physics engine with a generative pipeline aimed at turning prompts into 4D worlds, scenes, tasks, and policies. Targets researchers with a Python API; we're targeting creators with a canvas.

- Lucky Robots — A “game engine for robotics” backed by Draper Associates, building toward natural-language robot interaction on a custom engine with some MuJoCo internals. Same democratization thesis, different surface area.

- RoboGen (CMU/MIT) — GPT-4 proposes a task, generates the simulation scene from PartNet-Mobility and Objaverse objects, decomposes sub-tasks, and writes the reward function. The full propose-generate-learn loop, end to end. A direct precursor to Genesis.

- Holodeck (UPenn/Stanford/AI2) — Prompt in (“apartment for a researcher with a cat”), full interactive 3D environment out, in AI2-THOR. GPT-4 handles floor plans, materials, object selection from 51K Objaverse assets, and spatial constraints. Built on a game engine rather than MuJoCo, but it's the same loop.

- RobotDesignGPT — VLMs generating valid MJCF for robot bodies from text and image input. Scoped to robot bodies rather than full scenes, but it's the narrow technical bet SimGen is built on: LLMs can write MJCF.

- Gemini + MuJoCo WASM (Google AI tutorial, 2025) — Gemini generates MuJoCo simulation code that runs via WebAssembly in the browser. A tutorial rather than a product, but the exact pattern: prompt → MuJoCo code → simulation.

Adjacent work that shaped how we think about this. A wave of 2023–2024 research established that LLMs can generate the components of a simulation, even if not the simulation itself:

- GenSim and GenSim2 (MIT CSAIL) — Generate robot task code for tabletop manipulation in PyBullet and SAPIEN.

- Eureka and DrEureka (NVIDIA) — Write reward functions that beat human experts on 83% of tasks; teach a Shadow Hand to spin a pen.

- Text2Reward — Same idea with grounded code over a structured environment representation.

- Language to Rewards (DeepMind) — The closest spiritual ancestor. Tune MuJoCo MPC behavior interactively through language. Exactly the loop we want, just pointed at researchers tuning controllers instead of creators making videos.

- Prompt2Walk (UC Berkeley) — Few-shot LLM prompting outputs target joint positions for quadruped locomotion in MuJoCo. The most literal “prompt a robot” work, but it controls a fixed sim rather than generating one.

- Infinigen (Princeton) — Procedural scenes that now export directly to MJCF and USD. The missing piece is a natural-language front door.

A naming note:

- SimGen for autonomous driving (UCLA/Fudan, 2024) — Generates driving scene videos.

- SiMGen for molecular generation (Cambridge) — Zero-shot molecule generation.

Different projects, different problems, same three letters. We'll figure out the name situation.

What SimGen Is

We studied these projects, but that was not enough for us to feel we grokked the domain. So while working with Georgia Tech, we got our hands dirty and built SimGen.

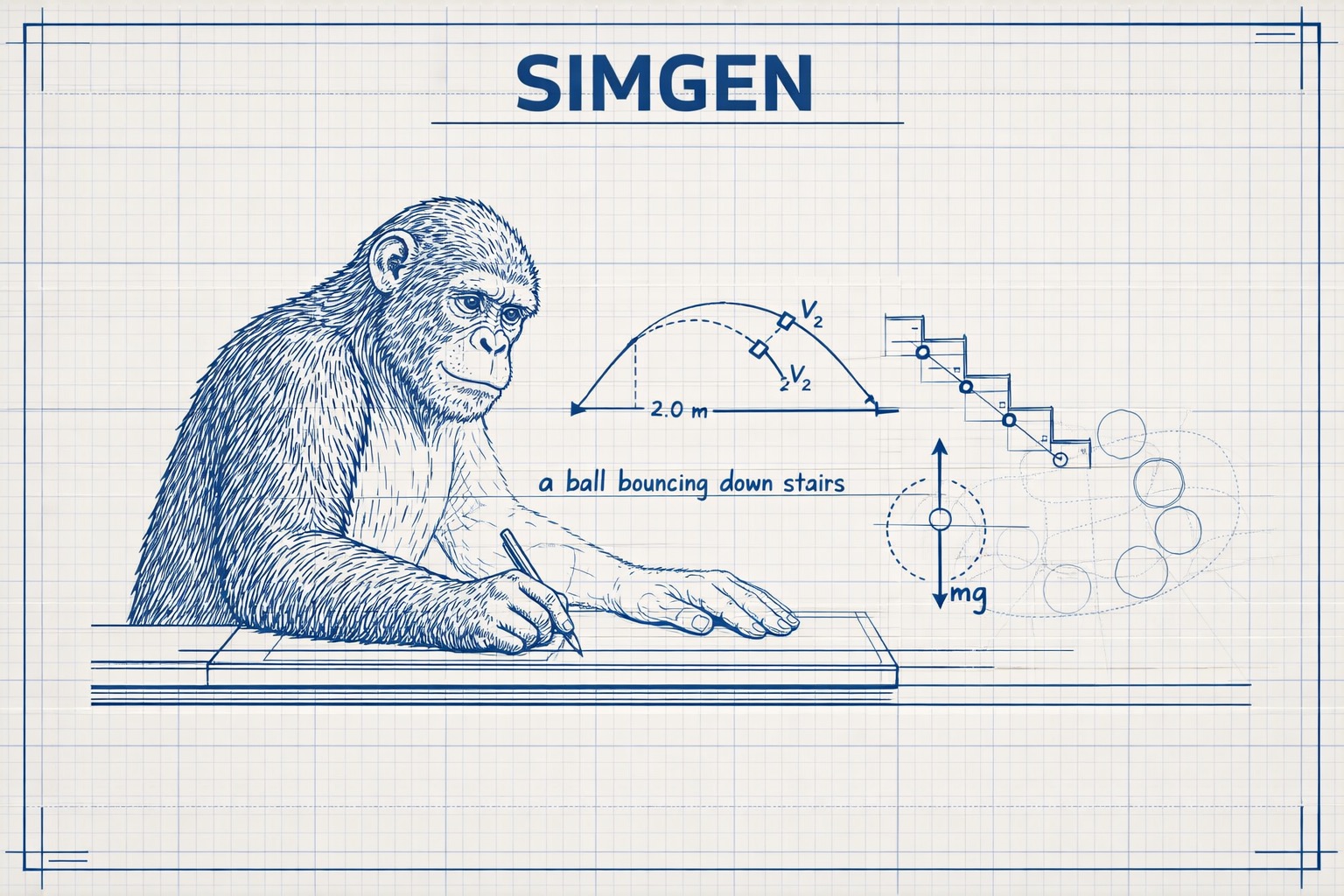

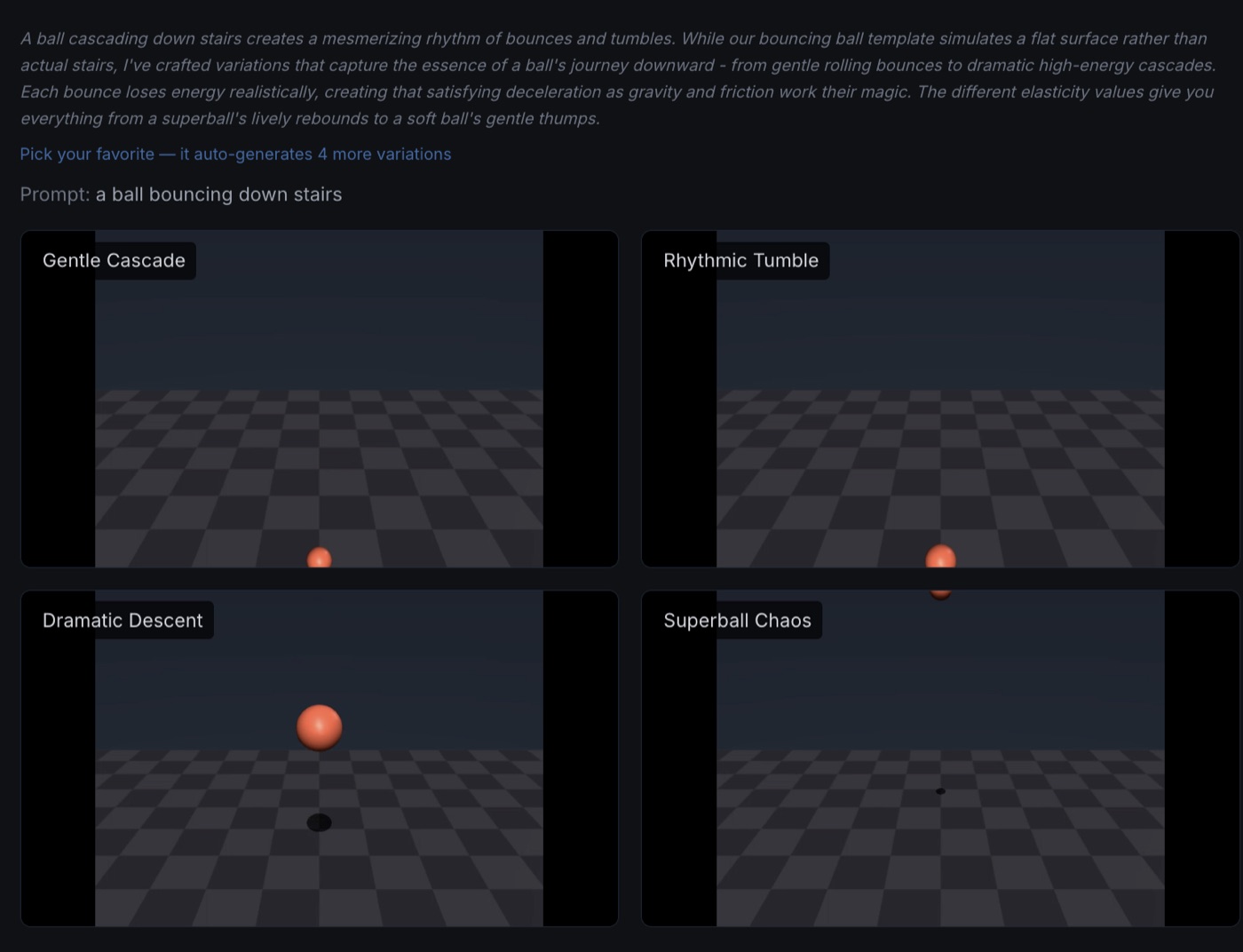

SimGen is a prompt-to-physics-simulation engine. You type a natural language prompt (“a humanoid doing a backflip on the Moon” or “a pendulum swinging in zero gravity”) and the system generates four MuJoCo simulation videos, each a different interpretation of your intent.

The interface borrows deliberately from Midjourney's creative workflow. Four variations appear. You upvote the ones that capture what you imagined. The system enters Flow Mode: hover over a favorite, click “Pick this one,” and it generates four new variations within a tight parameter range of your selection. A breadcrumb trail tracks your creative journey. An undo button lets you backtrack. It's the scientific method dressed up as a mood board.
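To make Flow Mode concrete, here is a minimal sketch of "four new variations within a tight parameter range" plus a breadcrumb trail with undo. The names `refine` and `FlowSession`, the dictionary of parameters, and the 5% spread are all assumptions for illustration, not SimGen's actual code.

```python
import random

def refine(selected, spread=0.05, n=4, seed=None):
    """Sample n parameter sets within +/- spread (relative) of the selected one."""
    rng = random.Random(seed)
    return [
        {k: v * (1.0 + rng.uniform(-spread, spread)) for k, v in selected.items()}
        for _ in range(n)
    ]

class FlowSession:
    """Hypothetical breadcrumb trail: every pick is recorded and can be undone."""

    def __init__(self, start):
        self.trail = [start]

    def pick(self, params):
        # Record the pick, then propose four nearby variations.
        self.trail.append(params)
        return refine(params)

    def undo(self):
        # Step back one pick; the starting point is never removed.
        if len(self.trail) > 1:
            self.trail.pop()
        return self.trail[-1]
```

Each pick narrows the search around something the creator already liked, and the trail makes it cheap to back out of a dead end.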

But here's what makes it fundamentally different from image or video generation: physics is the constraint, and the constraint is the point.

Midjourney lets you imagine the impossible. Cities floating in clouds, geometry that couldn't exist, vibes that defy every known law of nature. SimGen is the opposite. Gravity is real. Mass matters. A person can jump two feet, not twenty. The laws of physics aren't limitations to work around; they're the creative medium itself. The beauty comes from what's actually possible, which (if you think about it for more than a few seconds) is already pretty wild.

How It Works Under the Hood

The Pipeline

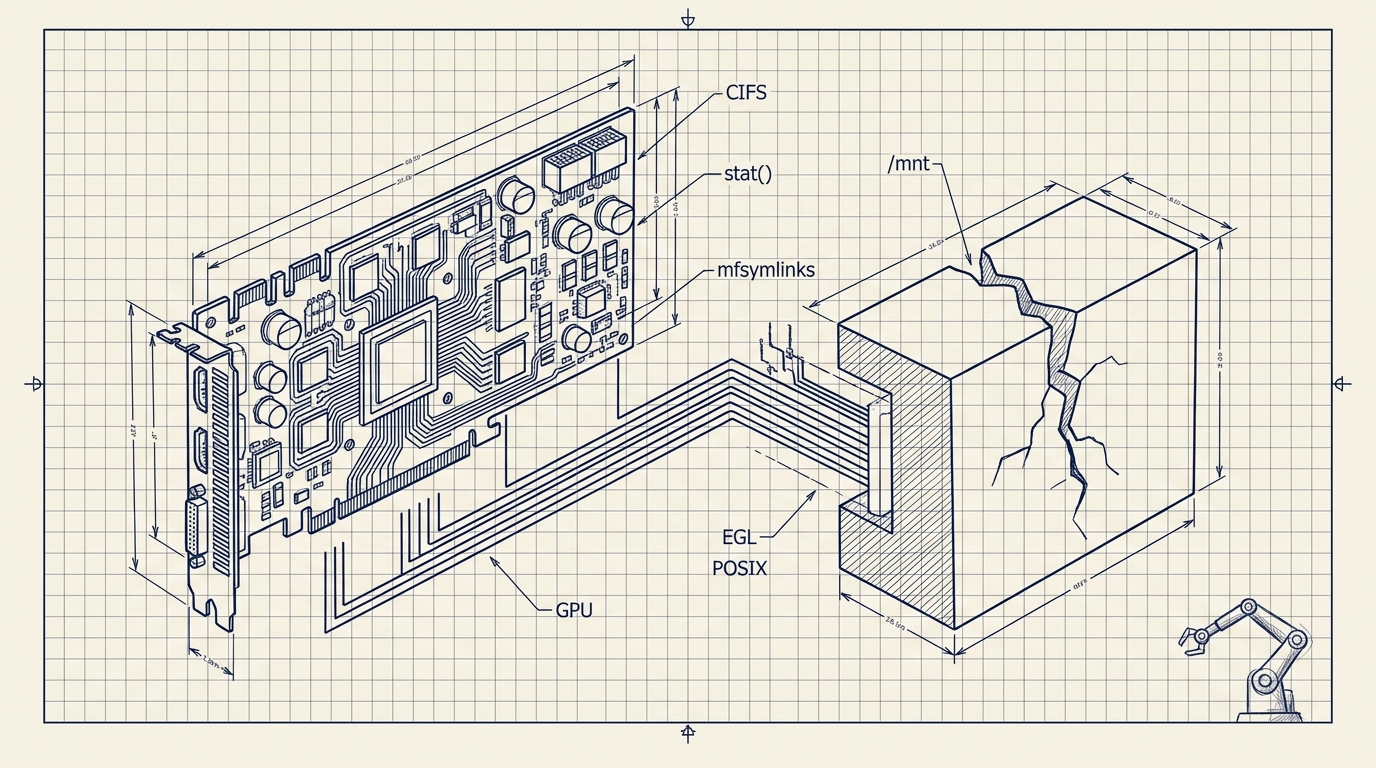

You type a prompt. Claude translates it into MuJoCo simulation parameters—gravity, mass, damping, initial velocity—constrained by real-world physics knowledge baked into its system prompt. It always generates four variations spanning a spectrum: Realistic, Cinematic, Stylized, and Pushing Limits. An H100 GPU server running headless MuJoCo with EGL rendering does the heavy lifting. For locomotion, Brax PPO policies trained on 8,192 parallel environments via JAX produce the motion.
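As a rough illustration of that step, here is a minimal sketch of how four style variations might be derived from one baseline and clamped to plausible ranges. The `SimParams` fields, the bounds, and the per-style scale factors are assumptions made for this example. In SimGen, Claude chooses the values, and the real schema and limits may differ.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class SimParams:
    """Hypothetical parameter set; field names are illustrative only."""
    gravity: float           # m/s^2, magnitude of downward acceleration
    mass: float              # kg
    damping: float           # joint damping coefficient
    initial_velocity: float  # m/s

# Assumed plausibility bounds (the post keeps its real limits in the system prompt).
BOUNDS = {
    "damping": (0.0, 10.0),
    "initial_velocity": (0.0, 20.0),
}

# Assumed scale factors: how far each style departs from the baseline.
STYLE_SCALE = {
    "Realistic": 1.0,
    "Cinematic": 1.25,
    "Stylized": 1.5,
    "Pushing Limits": 2.0,
}

def clamp(name, value):
    """Keep a parameter inside its assumed physical range."""
    lo, hi = BOUNDS[name]
    return max(lo, min(hi, value))

def make_variations(base: SimParams) -> dict[str, SimParams]:
    """Return one parameter set per style, each clamped to the bounds."""
    return {
        style: replace(
            base,
            initial_velocity=clamp("initial_velocity", base.initial_velocity * scale),
            damping=clamp("damping", base.damping / scale),
        )
        for style, scale in STYLE_SCALE.items()
    }
```

Calling `make_variations(SimParams(gravity=1.62, mass=70.0, damping=0.5, initial_velocity=12.0))` leaves the "Realistic" entry equal to the baseline, while "Pushing Limits" has its launch velocity capped at the upper bound rather than doubled.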

Prompt → Claude (physics params) → H100 / MuJoCo → 4 simulation videos → You rate → Loop tightens

The Feedback Loop

Every rating feeds back into the system. Upvoted simulations become few-shot examples in Claude's context, teaching it what this particular creator considers beautiful, dramatic, or realistic. After enough iterations, the model learns your aesthetic.
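One way to picture this is a helper that turns upvoted runs into few-shot text for the next request. This is a sketch under assumptions: the history record shape (`prompt`, `params`, `rating`), the function name, and the output format are hypothetical, and no model API is called here.

```python
import json

def build_few_shot_block(history, max_examples=3):
    """Format the most recent upvoted runs as few-shot examples for the prompt."""
    liked = [h for h in history if h["rating"] > 0]   # keep upvotes only
    recent = liked[-max_examples:]                    # newest examples win
    lines = ["Examples this creator upvoted:"]
    for h in recent:
        lines.append(f"Prompt: {h['prompt']}")
        lines.append(f"Params: {json.dumps(h['params'], sort_keys=True)}")
    return "\n".join(lines)
```

The returned text would be included in the model's context ahead of the new prompt, so downvoted runs simply never appear as examples.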

The VLA Connection

If that architecture sounds familiar to anyone in robotics, it should. A Vision-Language-Action model takes visual observation and language instruction and outputs robot actions. SimGen decomposes that same pipeline, just with different actors. Claude handles language-to-action (translating prompts into physics parameters). MuJoCo handles action-to-vision (rendering those parameters into video). And the human creator handles vision-to-reward (watching the result and rating it). The action space is simulation parameters instead of joint torques, and the reward model is a person with taste instead of a loss function. But the loop is the same loop.

Why I Built It

The motivation was never to replace research tools. MuJoCo, dm_control, Isaac Sim—these are extraordinary platforms for people who know what they're doing. The motivation was to learn, to contribute our small part to anyone interested in this problem, and to open the door for people who don't have years of simulation experience.

Our small contribution was a focus on fun and aesthetics rather than accuracy or deployment. When people can create, share, and react to physics simulations the way they do with any other visual medium, you start building a collective taste for what's compelling—and that taste immediately pays off in demo reels, educational content, and the material that gets people excited about robotics in the first place. Fun is what makes the internet valuable. It's also what's been missing from simulation.

The vision is that a filmmaker prototypes a zero-gravity fight scene, a game designer tests whether a physics mechanic feels right, a student explores orbital mechanics by describing what they want to see instead of writing simulation code. To be clear, this demo isn't there yet. It's a starting point, a proof of concept for a direction we believe in.

Where It Stands

SimGen is live. The core loop works: prompt, generate, rate, refine. Five simulation templates render correctly (pendulum, bouncing ball, robot arm, cartpole, humanoid). Environment presets and visual themes give creators intuitive controls. The feedback loop learns from every rating.

The honest gap is locomotion. Walking is the first thing everyone asks for, and it's the hardest thing to get right. Our Brax-trained policies were optimized for forward velocity, but they learned to crawl instead of walking upright, which is a classic reward-shaping problem and also, unfortunately, very funny to watch. Fixing this is priority one.
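For readers new to reward shaping, the sketch below shows the general idea: a reward that only counts forward velocity cannot tell crawling from walking, while added posture terms can. It is a generic illustration and not the fix we have shipped. The function name, the target height, and the weights are assumed values.

```python
def locomotion_reward(forward_velocity, torso_height, torso_up_z,
                      target_height=1.2, w_upright=1.0, w_height=2.0):
    """Forward-velocity reward plus posture terms (weights are assumptions).

    torso_up_z is the z-component of the torso's up axis: 1.0 when upright,
    near 0.0 when the torso is horizontal.
    """
    upright_bonus = w_upright * max(0.0, torso_up_z)
    height_penalty = w_height * abs(target_height - torso_height)
    return forward_velocity + upright_bonus - height_penalty

# Same speed, different posture.
crawling = locomotion_reward(forward_velocity=1.0, torso_height=0.4, torso_up_z=0.1)
walking = locomotion_reward(forward_velocity=1.0, torso_height=1.2, torso_up_z=1.0)
```

With velocity alone both gaits would score the same. With the posture terms, the upright gait scores higher, which is the pressure a crawling policy is missing.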

The Bigger Picture

Part 1 and Part 2 of this series were about making GPU simulation accessible to researchers. SimGen is about making physics simulation accessible to everyone. The throughline is the same belief: there are many bottlenecks in robotics, and we are actively finding them, fixing what we can, and sharing the results so that we or others can pick them up.

SimGen is open source at github.com/Haptic-AI/simgen.